Architecture & hardware guide#

xcore is a multicore microprocessor which enables highly flexible and responsive I/O, whilst delivering high performance for applications. The architecture enables a programming model where many simple tasks run concurrently and communicate using hardware support. Multiple xcore processors can be interconnected, and tasks running on separate physical processors can communicate seamlessly. To utilise the platform effectively it is helpful to understand the hardware model; the purpose of this document is to provide an overview of the platform and its features, and an introduction to utilising them using the C programming language.

Overview#

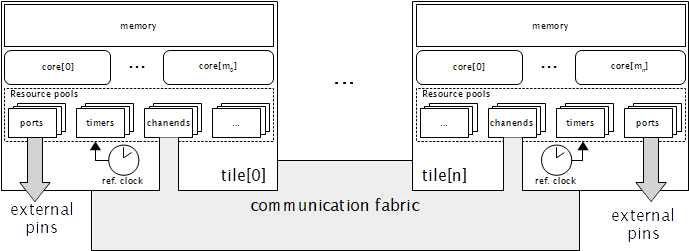

The xcore architecture facilitates scaling of applications over multiple physical packages in order to provide high performance as well as low-latency I/O. A complete xcore application targets a set of xcores and communication between them uses features of the xcore hardware (see below).

An xcore network is made up of one or more device packages; these are connected by the xCONNECT interconnect to allow high-speed, hardware-assisted communication.

Each package contains one or more nodes.

Each node contains one or more tiles plus interconnect which allows communication within and between tiles.

Each tile contains one or more logical cores, some memory, a reference clock, and a variety of resources.

Each logical core is a hardware thread - it shares the tile’s memory and resources with other cores, but each logical core has its own register set and can operate independently of the others.
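The containment hierarchy above can be sketched as a set of nested C structures. This is a host-side model for illustration only: the type names, the capacity limits, and the `network_logical_cores` helper are assumptions made here and are not part of any xcore API.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical capacity limits for this host-side model only. */
enum { MAX_PACKAGES = 4, MAX_NODES = 2, MAX_TILES = 4 };

/* network -> package -> node -> tile -> logical cores */
struct tile    { unsigned logical_cores; };
struct node    { unsigned tile_count;    struct tile    tiles[MAX_TILES]; };
struct package { unsigned node_count;    struct node    nodes[MAX_NODES]; };
struct network { unsigned package_count; struct package packages[MAX_PACKAGES]; };

/* Count the logical cores (hardware threads) in the whole network. */
unsigned network_logical_cores(const struct network *net)
{
    assert(net != NULL && net->package_count <= MAX_PACKAGES);
    unsigned total = 0;
    for (unsigned p = 0; p < net->package_count; ++p) {
        const struct package *pkg = &net->packages[p];
        for (unsigned n = 0; n < pkg->node_count; ++n) {
            const struct node *nd = &pkg->nodes[n];
            for (unsigned t = 0; t < nd->tile_count; ++t)
                total += nd->tiles[t].logical_cores;
        }
    }
    return total;
}
```

For a single package holding one node with two tiles of eight logical cores each, the helper returns 16.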

There are several resource types which exist on each tile. They can be claimed by a logical core, used, and then released for use by the same or another logical core. chanend resources are used to form channels for communication between logical cores. timer resources provide a logical core with a timestamp or a facility to wait for a time period, based on the reference clock. Port and clock block resources facilitate flexible GPIO.

Nodes#

Each physical package typically contains one node but may contain more. Multiple packages may be connected using xLINKs, to provide a multi-node system. A node typically contains two tiles.

Tiles#

Tiles are individual and independent processing units contained within nodes; each tile has its own memory, I/O subsystem, clock divider and other resources. Tiles within a node communicate using the communication fabric contained within that node, and can communicate with tiles in other packages using xLINKs.

Logical cores#

Each tile has eight logical cores. Each logical core has its own registers and executes instructions independently of the other logical cores. However, all logical cores within a tile share access to that tile’s resources and memory. The xcore pipeline has five stages and each stage takes one system clock cycle to complete. Almost every xcore instruction takes five cycles to execute using this pipeline. This makes it straightforward to calculate the duration of a straight-line instruction sequence. Five logical cores can operate in parallel, staggered such that on a given clock cycle each is using a different pipeline stage. These five run independently and each gets one fifth of the MIPS (millions of instructions per second) available to the entire tile. When more than five logical cores are active, the rate of execution of each drops because the five pipeline stages are shared between them. The xcore hardware scheduler uses round-robin to allocate each logical core a time slice.
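The timing rule above reduces to simple arithmetic. The following is a minimal sketch, assuming every instruction in the sequence takes the usual five cycles (the text says "almost every", so exceptions are ignored) and that the tile issues one instruction per clock cycle in total. The function names are illustrative and any clock frequency passed in is the caller's assumption.

```c
#include <assert.h>

/* Instruction rate seen by one logical core, in millions of instructions
 * per second. With five or fewer active cores each gets one fifth of the
 * tile's rate; with more, the tile's rate is shared equally. */
double per_core_mips(double tile_clock_mhz, unsigned active_cores)
{
    assert(active_cores >= 1 && active_cores <= 8);
    unsigned share = active_cores < 5 ? 5 : active_cores;
    return tile_clock_mhz / share;
}

/* Duration in nanoseconds of a straight-line sequence on one core. */
double sequence_ns(unsigned instructions, double tile_clock_mhz,
                   unsigned active_cores)
{
    /* instructions / (MIPS * 1e6) seconds == instructions * 1000 / MIPS ns */
    return instructions * 1000.0 / per_core_mips(tile_clock_mhz, active_cores);
}
```

With an assumed 500 MHz tile clock, `per_core_mips(500.0, 5)` gives 100, `per_core_mips(500.0, 8)` gives 62.5, and a 10-instruction sequence on one of five active cores takes 100 ns.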

A logical core may be put into a “paused state” if it is waiting for a resource (see below) to satisfy a specified condition (for example a timer reaching a required value). When a logical core is in the paused state it is removed from the list of logical cores scheduled by the xcore hardware scheduler. Once the resource satisfies the required condition the logical core is put back on the list of logical cores to schedule.
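The interaction between pausing and round-robin scheduling can be modelled in a few lines of portable C. This is a simplified software model, not the hardware's actual implementation: it ignores pipeline constraints, and the `scheduler` type and `sched_*` functions are names invented for this sketch.

```c
#include <assert.h>
#include <stdbool.h>

enum { CORES_PER_TILE = 8 };

struct scheduler {
    bool runnable[CORES_PER_TILE]; /* false while a core is paused or unused */
    unsigned last;                 /* core that was scheduled most recently */
};

void sched_init(struct scheduler *s)
{
    for (unsigned i = 0; i < CORES_PER_TILE; ++i)
        s->runnable[i] = false;
    s->last = CORES_PER_TILE - 1;  /* so that core 0 is considered first */
}

/* A core waiting on a resource is removed from the schedule list. */
void sched_pause(struct scheduler *s, unsigned core)
{
    assert(core < CORES_PER_TILE);
    s->runnable[core] = false;
}

/* When the resource condition is met the core rejoins the list. */
void sched_resume(struct scheduler *s, unsigned core)
{
    assert(core < CORES_PER_TILE);
    s->runnable[core] = true;
}

/* Round-robin: pick the next runnable core after the last one scheduled.
 * Returns -1 if every core is paused. */
int sched_next(struct scheduler *s)
{
    for (unsigned step = 1; step <= CORES_PER_TILE; ++step) {
        unsigned core = (s->last + step) % CORES_PER_TILE;
        if (s->runnable[core]) {
            s->last = core;
            return (int)core;
        }
    }
    return -1;
}
```

In this model a paused core consumes no scheduling slots, so the remaining cores are visited more often until it is resumed.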

I/O and pooled resources#

Resources are shared between all cores in a tile. Many types of resource are available, and the exact types and numbers of resources vary between devices. Resources are general-purpose peripherals which help to accelerate real-time tasks and enable efficient software implementations of higher-level peripherals (for example, a UART). Many of the available resource types are described in later sections. Due to the diverse nature of resources, their interfaces vary somewhat. However, most resources have some or all of the following traits:

Pooled - the xcore tile maintains a pool of the resource type - a core can allocate a resource from the pool and free it when no longer required.

Input/Output - values can be read from and/or written to the resource (for example, the value of a group of external pins).

Event-raising - the resource can generate events when a condition occurs (on input resources, this will indicate that data is available to be read). Events can wake a core from a paused state.

Configurable triggers - some ‘event-raising’ resources can be configured to generate events under programmable conditions.
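The "pooled" trait can be illustrated with a small allocate/free model. This is a sketch in portable C rather than the xcore resource API: the pool size of four and the names `resource_pool`, `pool_alloc` and `pool_free` are assumptions chosen for the example.

```c
#include <assert.h>
#include <stdbool.h>

enum { POOL_SIZE = 4 };  /* hypothetical; real counts vary between devices */

struct resource_pool {
    bool in_use[POOL_SIZE];
};

/* Claim the first free resource. Returns its index, or -1 if the pool
 * is exhausted and the caller must wait or do without. */
int pool_alloc(struct resource_pool *pool)
{
    for (int id = 0; id < POOL_SIZE; ++id) {
        if (!pool->in_use[id]) {
            pool->in_use[id] = true;
            return id;
        }
    }
    return -1;
}

/* Return a resource to the pool so that any core may claim it again. */
void pool_free(struct resource_pool *pool, int id)
{
    assert(id >= 0 && id < POOL_SIZE && pool->in_use[id]);
    pool->in_use[id] = false;
}
```

Because the pool is finite, allocation can fail; code that allocates a pooled resource should check the result and free the resource once it is no longer required.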

Though available resources vary, all tiles have a number of common resource types:

Ports - These provide input from and output to the physical pins attached to the tile. Ports are highly configurable and can automatically shift data in and out as well as generate events on reading certain values. As they have a fixed mapping to physical pins, ports are allocated explicitly (rather than from a pool), and have fixed widths which can be 1, 4, 8, 16 or 32 bits.

Clock Blocks - Configurable clocks for controlling the rate at which a port shifts data in and out. These can divide the reference clock or be driven by a single-bit port.

Timers - Provide a means of measuring time as well as generating events at fixed times in the future - this can be used to implement very precise delays.

Chanends - An endpoint for communicating over the network fabric. A chanend (short for ‘channel end’) can communicate with any other chanend in the network (whether on the same tile, or on a different physical node).

Communication fabric#

The communication fabric is a physical link between channel ends within a network, which allows any channel end to send data to any other channel end. When one channel end first sends data to another, a path through the network is established. This path persists until closed explicitly (usually as part of a transaction) and handles all traffic from the sender to the receiver during that time. Links are directed, so if channel end A sends data to channel end B, and then (without the link being closed) B sends data back to A, two links will be opened. These two links will not necessarily take the same route through the network. The communication capacity between channel ends within a single node is always enough for at least two links to be open. Between nodes, the capacity depends on the number of physical links which are connected.
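The directed nature of links can be made concrete with a small bookkeeping model. This sketch only tracks which sender-to-receiver paths are open; it does not model routing or capacity, and the `fabric` type, the `fabric_*` functions and the limit of eight links are all assumptions made for illustration.

```c
#include <assert.h>
#include <stdbool.h>

enum { MAX_LINKS = 8 };  /* hypothetical limit for this model */

struct link   { int src; int dst; bool open; };
struct fabric { struct link links[MAX_LINKS]; };

/* Send from src to dst: reuse the open src->dst link if there is one,
 * otherwise open a new one. Returns the link slot, or -1 if none is free. */
int fabric_send(struct fabric *f, int src, int dst)
{
    int free_slot = -1;
    for (int i = 0; i < MAX_LINKS; ++i) {
        const struct link *l = &f->links[i];
        if (l->open && l->src == src && l->dst == dst)
            return i;                      /* path already established */
        if (!l->open && free_slot < 0)
            free_slot = i;
    }
    if (free_slot >= 0)
        f->links[free_slot] = (struct link){ src, dst, true };
    return free_slot;
}

/* Explicitly close the src->dst link; the reverse link is unaffected. */
void fabric_close(struct fabric *f, int src, int dst)
{
    for (int i = 0; i < MAX_LINKS; ++i) {
        struct link *l = &f->links[i];
        if (l->open && l->src == src && l->dst == dst)
            l->open = false;
    }
}

unsigned fabric_open_links(const struct fabric *f)
{
    unsigned count = 0;
    for (int i = 0; i < MAX_LINKS; ++i)
        count += f->links[i].open ? 1u : 0u;
    return count;
}
```

In this model, A sending to B and B replying to A leaves two links open, and closing A-to-B leaves the B-to-A link in place until it is closed separately.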